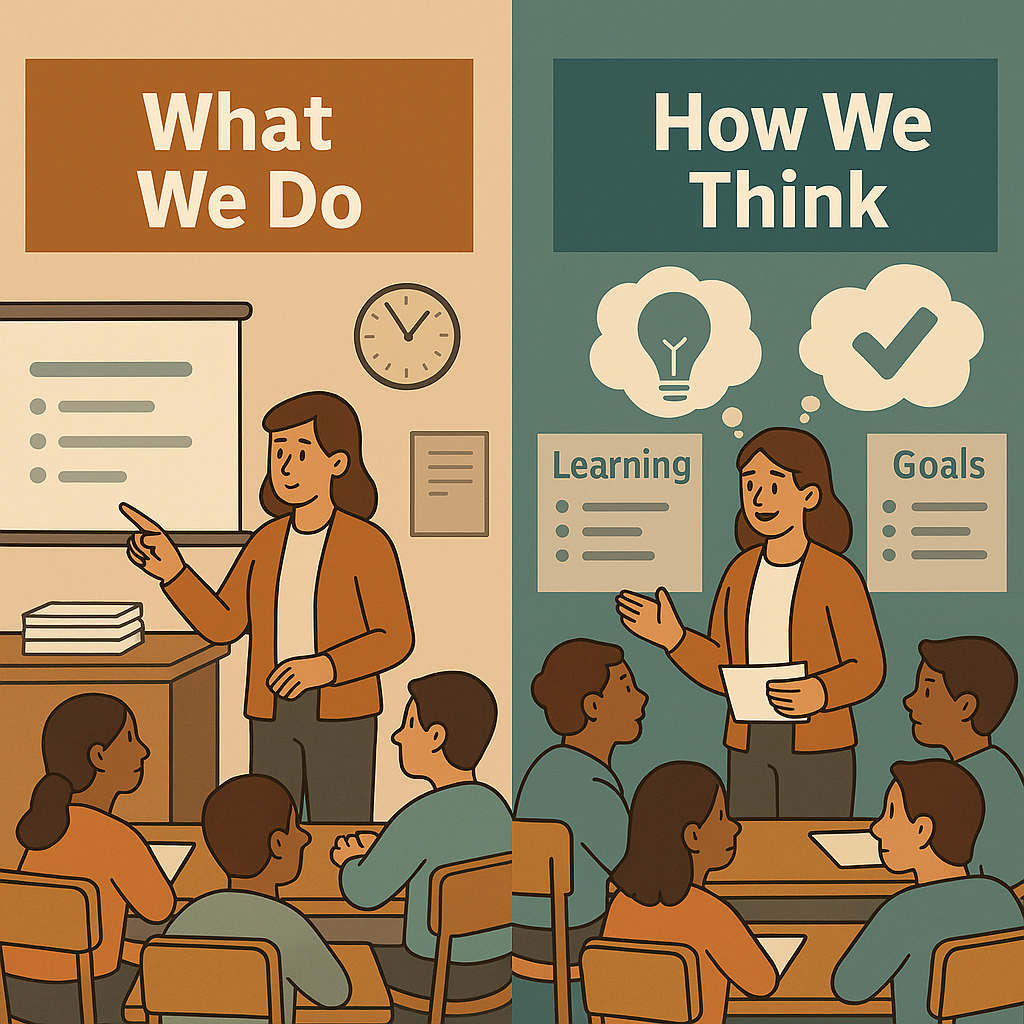

There was a time when I judged a day by my choreography. The mini lesson fit the plan, the slides clicked forward, the room felt orderly, and the stack of graded work on my desk grew smaller by the hour. I moved from piece to piece and called it progress. Then a quieter measure began to press on me. What I do matters, yet how I think about the effect of what I do matters more. The difference shows up not as a new bag of tricks, but as a new habit of mind that keeps learning at the center.

The “What We Do” Mindset

For much of the last century, the default pattern has looked like this. The teacher delivers input, the class completes an activity, and a grade appears at the end. It is clean and familiar. It promises coverage. It also tends to keep thinking passive. In this frame the question is “What will I teach?” and the plan that follows often emphasizes materials, timing, and compliance. Lessons run, assignments collect, and grades record. Learners move with the current because that is where the points live.

This mindset can produce moments that feel successful. A well-structured lecture can simplify a complex idea. A tidy worksheet can give quick practice. A smooth discussion can calm a restless room. Yet the engine behind these wins is usually routine rather than evidence. When evidence appears, it often comes too late to change anything important. Black and Wiliam warned about this pattern a generation ago. They argued that assessment used only as a summary misses its power, while assessment used during learning raises achievement because it allows teachers and learners to act on information in time for it to matter. That conclusion rests on hundreds of studies, and it still holds.

Even practices that look modern can settle into old grooves. Exit slips collected but never used create an illusion of feedback. Comments that travel with a grade tend to end the conversation rather than extend it. Large projects with detailed rubrics can drift into compliance if learners are not helped to notice their progress against clear criteria while the work is underway. In short, the “what we do” mindset can perfect performance while leaving learning uncertain. It is possible to cover everything on the pacing guide and still not see whether understanding has taken root.

The “How We Think” Mindset

A different story begins with clarity. Learners should be able to answer three questions at almost any moment. What am I learning? Why? How will I know I have it? Teacher clarity sits at a weighted mean effect size of 0.85, which places it at more than twice the common marker for a year of growth. The number signals what practice already suggests. When goals and success criteria are visible and usable, attention becomes sharper and effort becomes more productive.

Thinking for impact also treats evidence as part of the lesson rather than a ceremony at the end. Formative evaluation is more than a ritual. Fuchs and Fuchs found an average effect size of 0.70 when teachers systematically adjust instruction in response to what they see. Broader reviews show more modest averages near 0.40, which reminds us that the power lies in the decisions that follow, not just in the existence of a quiz or a ticket. When evidence guides the next move, achievement improves.

The same mindset invites learners to monitor and judge quality in ways that steer their own progress. Evaluation and reflection have an effect size of 0.75. In practice this looks like short routines in which current work is compared with shared criteria, a gap is named, and a next step is chosen. The routine is simple. The habit is powerful. Feedback belongs in this picture as a conversation with direction, and the evidence points to stronger returns when comments target the task and the process rather than global judgments. When a note tells a learner exactly how to strengthen a claim with evidence and reasoning, the next draft often moves. When a number arrives first, thinking tends to stop. The small slice on comments without grades shows an average near 0.19, a reminder that judgment can overshadow guidance if we are not careful.

A final cross-check comes from cognitive psychology. Dunlosky and colleagues reviewed common learning techniques and found that practice testing and spaced practice outperform rereading and highlighting. The implication for classroom design is clear. Build opportunities to retrieve, compare, and revise so that thinking does the heavy lifting and memory has a chance to hold on.

The meaningful comparison is not between one strategy and another. It is between two mindsets. One measures teaching by the smoothness of its performance. The other measures learning by the evidence of its progress. The first promises control. The second delivers growth. When our thinking shifts toward impact, plans change, talk changes, and the room changes with them. Coverage slows down just enough for learning to speed up. That is the quiet flip worth making, and it begins in the way we think about what we do.